Table of contents

- Introduction

- The correlations function and its arguments

- Computing correlation coefficients using the command line

- Computing correlation coefficients using the GUI

Introduction

The lsa.corr function computes Pearson product-moment or Spearman rank-order correlation coefficients between variables within groups of respondents defined by splitting variables. The splitting variables are optional. If no splitting variables are provided, the results will be computed at the country level only. If splitting variables are provided, the data within each country will be split into groups by all splitting variables and the correlation coefficients will be computed by the categories of the last splitting variable.

Note that the correlation coefficients can be computed between background/contextual variables, between background/contextual variables and sets of plausible values (PVs), or between sets of PVs, taking into account the complex sampling and assessment design of the study of interest. When a background/contextual variable is correlated with a set of PVs, the correlation coefficient is computed between the background/contextual variable and each PV in the set. The estimates for all PVs in the set are then averaged and their standard error is computed using formulas that depend on the study of interest. When two sets of PVs are correlated, the first PV in the first set is correlated with the first PV in the second set, the second PV in the first set with the second PV in the second set, and so on. At the end, the estimates are averaged and their standard error is computed using formulas that again depend on the study of interest.

Whatever the estimate is, the standard error will be computed taking into account the complex sampling and assessment designs of the studies. Refer here for a short overview on the complex sampling and assessment designs of large-scale assessments and surveys. If interested in more in-depth details on the complex sampling and assessment designs of a particular study and how estimates and their standard errors are computed, refer to its technical documentation and user guide.

Like any other function in the RALSA package, the lsa.corr function recognizes the study data and applies the correct estimation techniques for the study's sampling and assessment design without any extra input from the user.
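The averaging of PV-based estimates described above can be illustrated with a small base R sketch. It is not the code used by lsa.corr: the numbers are invented, and the combination rule shown (average sampling variance plus an inflated between-PV variance) is one common approach that is assumed here for illustration. The exact formulas depend on the study.

```r
# Hypothetical correlation estimates of one background variable with each of five PVs
pv_corr <- c(0.31, 0.29, 0.33, 0.30, 0.32)
# Hypothetical sampling variance of each of those five estimates
pv_samp_var <- c(0.0004, 0.0005, 0.0004, 0.0006, 0.0005)

M <- length(pv_corr)

# Final estimate: the average of the per-PV correlation coefficients
final_corr <- mean(pv_corr)

# Sampling variance component: here, the average of the per-PV sampling variances
sampling_var <- mean(pv_samp_var)

# Measurement variance component: between-PV variance inflated by (1 + 1/M)
measurement_var <- (1 + 1 / M) * var(pv_corr)

# Standard error combines both components
final_se <- sqrt(sampling_var + measurement_var)

print(c(estimate = final_corr, SE = final_se))
```

The two components correspond in spirit to the sampling and measurement variance columns reported in the output workbook.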

The correlations function and its arguments

The lsa.corr function has the following arguments:

- data.file – The file containing lsa.data object. Either this or data.object shall be specified, but not both.

- data.object – The object in the memory containing lsa.data object. Either this or data.file shall be specified, but not both.

- split.vars – Categorical variable(s) to split the results by. If no split variables are provided, the results will be for the overall countries’ populations. If one or more variables are provided, the results will be split by all but the last variable and the percentages of respondents will be computed by the unique values of the last splitting variable.

- bckg.corr.vars – Names of continuous background or contextual variables to compute the correlation coefficients for. The results will be computed by all groups specified by the splitting variables.

- PV.root.corr – The root names for the sets of plausible values to compute the correlation coefficients for.

- corr.type – String of length one, specifying the type of the correlations to compute, either "Pearson" (default) or "Spearman".

- weight.var – The name of the variable containing the weights. If no name of a weight variable is provided, the function will automatically select the default weight variable for the provided data, depending on the respondent type.

- DF.type – The method used to compute the degrees of freedom, either "JR" (default) or "WS". See details.

- include.missing – Logical, shall the missing values of the splitting variables be included as categories to split by and all statistics produced for them? The default (FALSE) takes all cases on the splitting variables without missing values before computing any statistics.

- shortcut – Logical, shall the “shortcut” method for IEA TIMSS, TIMSS Advanced, TIMSS Numeracy, eTIMSS, PIRLS, ePIRLS, PIRLS Literacy and RLII be applied? The default (FALSE) applies the “full” design when computing the variance components and the standard errors of the estimates.

- save.output – Logical, shall the output be saved in an MS Excel file (default) or not (printed to the console or assigned to an object).

- output.file – Full path to the output file including the file name. If omitted, a file with a default file name “Analysis.xlsx” will be written to the working directory (getwd()).

- open.output – Logical, shall the output be opened after it has been written? The default (TRUE) opens the output in the default spreadsheet program installed on the computer.
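As an illustration of how several of these arguments fit together, here is a hedged sketch of a call that splits the results by a categorical variable. The splitting variable name ITSEX (student sex) is an assumption about the merged data and should be checked against the actual file. The call is guarded so that it only runs when RALSA is installed and the merged file exists.

```r
# Path of the merged file used in the examples of this document
merged_file <- "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData"

# Run only when RALSA is installed and the merged file is present on this machine
if (requireNamespace("RALSA", quietly = TRUE) && file.exists(merged_file)) {
  library(RALSA)
  lsa.corr(
    data.file = "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData",
    split.vars = "ITSEX",                     # assumed name of the student sex variable
    bckg.corr.vars = c("ASBGSB", "ASBGSSB"),  # two continuous background scales
    corr.type = "Pearson",                    # the default, written out for clarity
    DF.type = "JR",                           # the default, written out for clarity
    include.missing = FALSE,                  # drop cases with missing values on split.vars
    open.output = FALSE                       # do not open the workbook when done
  )
}
```

Since output.file is omitted in this sketch, the results would be written to “Analysis.xlsx” in the working directory.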

Notes:

- Either data.file or data.object shall be provided as source of data. If both of them are provided, the function will stop with an error message.

- The function computes correlation coefficients by the categories of the splitting variables. The percentages of respondents in each group are computed within the groups specified by the last splitting variable. If no splitting variables are added, the results will be computed only by country.

- Multiple continuous background variables and/or sets of plausible values can be provided to compute correlation coefficients for. Please note that in this case the results will slightly differ compared to using each pair of the same background continuous variables or PVs in separate analyses. This is because the cases with missing values are removed in advance and the more variables are provided to compute correlations for, the more cases are likely to be removed. That is, the function supports only listwise deletion.

- Computation of correlation coefficients involving plausible values requires providing a root of the plausible values names in PV.root.corr. All studies (except CivED, TEDS-M, SITES, TALIS and TALIS Starting Strong Survey) have a set of PVs per construct (e.g. in TIMSS five for overall mathematics, five for algebra, five for geometry, etc.). In some studies (say TIMSS and PIRLS) the names of the PVs in a set always start with a character string and end with the sequential number of the PV. For example, the names of the set of PVs for overall mathematics in TIMSS are BSMMAT01, BSMMAT02, BSMMAT03, BSMMAT04 and BSMMAT05. The root of the PVs for this set to be added to PV.root.corr will be “BSMMAT”. The function will automatically find all the variables in this set of PVs and include them in the analysis. In other studies like OECD PISA and IEA ICCS and ICILS the sequential number of each PV is included in the middle of the name. For example, in ICCS the names of the set of PVs are PV1CIV, PV2CIV, PV3CIV, PV4CIV and PV5CIV. The root PV name has to be specified in PV.root.corr as “PV#CIV”. More than one set of PVs can be added. Note, however, that providing multiple continuous variables for the bckg.corr.vars argument and multiple PV roots for the PV.root.corr argument will affect the results for the correlation coefficients for the PVs because the cases with missing values on bckg.corr.vars will be removed and this will also affect the results from the PVs (i.e. listwise deletion). On the other hand, using only sets of PVs to correlate should not affect the results on any PV estimates because PVs shall not have any missing values.

- A sufficient number of variable names (background/contextual) or PV roots have to be provided – either two background variables, or two PV roots, or a mixture of them with a total length of two (i.e. one background/contextual variable and one PV root).

- If include.missing = FALSE (default), all cases with missing values on the splitting variables will be removed and only cases with valid values will be retained in the statistics. Note that the data from the studies can be exported in two different ways: (1) setting all user-defined missing values to NA; and (2) importing all user-defined missing values as valid ones and adding their codes in an additional attribute to each variable. If include.missing is set to FALSE (default) and the data used is exported using option (2), the output will remove all values from the variable matching the values in its missings attribute. Otherwise, it will include them as valid values and compute statistics for them.

- The shortcut argument is valid only for TIMSS, TIMSS Advanced, TIMSS Numeracy, PIRLS, ePIRLS, PIRLS Literacy and RLII. Previously, in computing the standard errors, these studies were using 75 replicates because one of the schools in each of the 75 JK zones had its weights doubled and the other one was taken out. Since TIMSS 2015 and PIRLS 2016 the studies use 150 replicates and in each JK zone a school once has its weights doubled and once is taken out, i.e. the computations are done twice for each zone. For more details see the technical documentation and user guides for TIMSS 2015 and PIRLS 2016. If replication of the tables and figures is needed, the shortcut argument has to be changed to TRUE.

- The function provides two-tailed t-test and p-values for the correlation coefficients.

- TIMSS Longitudinal has two points of administration with the same sample of schools and, respectively, students. The teachers, however, are not necessarily the same teachers in both administrations. When grade 4 teacher data is merged to student data, there are two sets of mathematics and science weights – one for the first year and one for the second year of administration. For grade 8, mathematics and science teachers have to be merged separately to student data, each having their own weights for the first and second year of administration. When analyses are performed, the first available weight is chosen as default. Student questionnaire items are available as two separate sets, one per year of administration. It is up to the analyst to choose the proper combination of items and weights for a particular analysis. For more details on the structure of the TIMSS Longitudinal database, see the TIMSS 2023 Longitudinal User Guide for the International Database.
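The effect of listwise deletion mentioned in the notes can be seen in a small base R sketch. It uses unweighted correlations on invented data, so it only illustrates why adding more variables can change the coefficient for a given pair. lsa.corr itself applies weights and the study design.

```r
# Toy data: variable c has two missing values, a and b are complete
toy <- data.frame(
  a = c(1, 2, 3, 4, 5, 6),
  b = c(2, 1, 4, 3, 6, 5),
  c = c(1, NA, 2, NA, 3, 4)
)

# Pairwise deletion: each pair uses all cases that are complete for that pair
pairwise <- cor(toy, use = "pairwise.complete.obs")

# Listwise deletion: any case with a missing value on any variable is dropped first
listwise <- cor(toy, use = "complete.obs")

# Number of cases behind the a-b correlation under each approach
n_pair_ab <- sum(complete.cases(toy[, c("a", "b")]))
n_listwise <- sum(complete.cases(toy))

print(c(pairwise_ab = pairwise["a", "b"], listwise_ab = listwise["a", "b"]))
print(c(n_pair_ab = n_pair_ab, n_listwise = n_listwise))
```

The a-b correlation differs between the two matrices only because variable c was included in the same analysis.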

The output produced by the function is stored in an MS Excel workbook. The workbook has three sheets. The first one (“Estimates”) will have the following columns, depending on what kind of variables were included in the analysis:

- <Country ID> – a column containing the names of the countries in the file for which statistics are computed. The exact column header will depend on the country identifier used in the particular study.

- <Split variable 1>, <Split variable 2>… – columns containing the categories by which the statistics were split. The exact names will depend on the variables in split.vars.

- n_Cases – the number of cases in the sample used to compute the statistics.

- Sum_<Weight variable> – the estimated population number of elements per group after applying the weights. The actual name of the weight variable will depend on the weight variable used in the analysis.

- Sum_<Weight variable>_SE – the standard error of the estimated population number of elements per group. The actual name of the weight variable will depend on the weight variable used in the analysis.

- Percentages_<Last split variable> – the percentages of respondents (population estimates) per groups defined by the splitting variables in split.vars. The percentages will be for the last splitting variable which defines the final groups.

- Percentages_<Last split variable>_SE – the standard errors of the percentages from above.

- Variable – the variable names (background/contextual or PV root names) to be matched against the rows of the following columns, forming the correlation matrices together.

- Correlation_<Background variable> – the correlation coefficient of each continuous <Background variable> specified in bckg.corr.vars against itself and each of the variables in the column “Variable”. There will be one column with the correlation coefficient estimate for each variable specified in bckg.corr.vars and/or set of PVs specified in PV.root.corr.

- Correlation_<Background variable>_SE – the standard error of the correlation of each continuous <Background variable> specified in bckg.corr.vars. There will be one column with the SE of the correlation coefficient estimate for each variable specified in bckg.corr.vars and/or set of PVs specified in PV.root.corr.

- Correlation_<root PV> – the correlation coefficient of each set of PVs specified as PV root name in PV.root.corr against itself and each of the variables in the column “Variable”. There will be one column with the correlation coefficient estimate for each set of PVs specified in PV.root.corr and each other set of PVs specified in PV.root.corr and/or each continuous background variable specified in bckg.corr.vars.

- Correlation_<root PV>_SE – the standard error of the correlation of each set of PVs specified as PV root name in PV.root.corr. There will be one column with the SE of the correlation coefficient estimate for each set of root PVs specified in PV.root.corr and another set of PVs specified in PV.root.corr and/or each continuous background variable specified in bckg.corr.vars.

- Correlation_<root PV>_SVR – the sampling variance component for the correlation of the PVs with the same <root PV> specified in PV.root.corr. There will be one column with the sampling variance component for the correlation coefficient estimate for each set of PVs specified in PV.root.corr with the other variables (other sets of PVs or background/contextual variables).

- Mean_<root PV>_MVR – the measurement variance component for the correlation of the PVs with the same <root PV> specified in PV.root.corr. There will be one column with the measurement variance component for the correlation coefficient estimate for each set of PVs specified in PV.root.corr with the other variables (other sets of PVs or background/contextual variables).

- Correlation_<Background variable>_SVR – the sampling variance component for the correlation of the particular background variable with the set of PVs specified in PV.root.corr it is correlated with. There will be one column with the sampling variance component for the correlation coefficient estimate for each background/contextual variable correlated with a set of PVs specified in PV.root.corr.

- Correlation_<Background variable>_MVR – the measurement variance component for the correlation of the particular background variable with a set of PVs specified in PV.root.corr. There will be one column with the measurement variance component for the correlation coefficient estimate for each background/contextual variable correlated with a set of PVs specified in PV.root.corr.

- t_<root PV> – the t-test value for the correlation coefficients of a set of PVs when correlating them with other variables (background/contextual or other sets of PVs).

- t_<Background variable> – the t-test value for the correlation coefficients of background variables when correlating them with other variables (background/contextual or other sets of PVs).

- p_<root PV> – the p-value for the correlation coefficients of a set of PVs when correlating them with other variables (background/contextual or other sets of PVs).

- p_<Background variable> – the p-value for the correlation coefficients of background variables when correlating them with other variables (background/contextual or other sets of PVs).
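To make the relationship between the estimate, SE, t and p columns more concrete, the following base R sketch derives a two-tailed p-value from an estimate and its standard error. The numbers are invented, and the assumption that the t-value is the estimate divided by its standard error is a common convention that is not confirmed here as the exact lsa.corr routine. The degrees of freedom are also simply assumed, whereas the function estimates them with the method chosen in DF.type.

```r
# Hypothetical values read from one row of the "Estimates" sheet
estimate <- 0.31   # correlation coefficient
std_err  <- 0.04   # its standard error
df       <- 60     # assumed effective degrees of freedom

# t-value as the ratio of the estimate to its standard error (assumed convention)
t_value <- estimate / std_err

# Two-tailed p-value from the t distribution
p_value <- 2 * pt(-abs(t_value), df = df)

print(c(t = t_value, p = p_value))
```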

The second sheet (“Analysis information”) contains some additional information related to the analysis per country in the following columns:

- DATA – used data.file or data.object.

- STUDY – which study the data comes from.

- CYCLE – which cycle of the study the data comes from.

- WEIGHT – which weight variable was used.

- DESIGN – which resampling technique was used (JRR or BRR).

- SHORTCUT – logical, whether the shortcut method was used.

- NREPS – how many replication weights were used.

- ANALYSIS_DATE – on which date the analysis was performed.

- START_TIME – at what time the analysis started.

- END_TIME – at what time the analysis finished.

- DURATION – how long the analysis took in hours, minutes, seconds and milliseconds.

The third sheet (“Calling syntax”) contains the call to the function with values for all parameters as it was executed. This is useful if the analysis needs to be replicated later.

Computing correlation coefficients using the command line

In the examples that follow we will merge a new data file (see how to merge files here) with student and school principal data from PIRLS 2016 (Australia and Slovenia), taking all variables from both file types:

lsa.merge.data(inp.folder = "C:/temp",
               file.types = list(acg = NULL, asg = NULL),
               ISO = c("aus", "svn"),
               out.file = "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData")

As a start, let’s compute the correlation coefficient between two background scales – Students’ Sense of School Belonging (ASBGSSB) and Students Being Bullied (ASBGSB) in Australia and Slovenia (check the PIRLS 2016 technical documentation on how these scales are constructed and their properties):

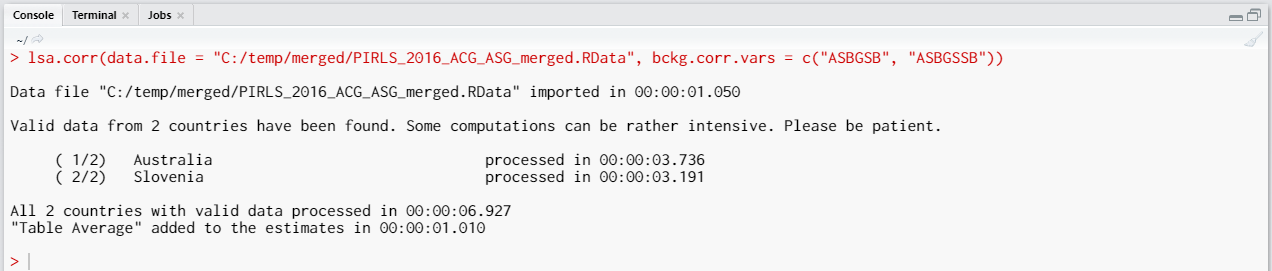

lsa.corr(data.file = "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData",
         bckg.corr.vars = c("ASBGSB", "ASBGSSB"))

A few things to note:

- More than two background variables and/or sets of PVs can be correlated at a time. The correlation coefficients will be computed for each pair separately. However, note that all cases with missing values on any of the variables will be removed in advance. This can change the results compared to executing the analyses for each pair separately, as explained here.

- In international large-scale assessments all analyses must be done separately by country. There is no need, however, to add the country ID variable (IDCNTRY, or CNT in PISA) as a splitting variable. The function will identify it automatically and add it to the vector of split.vars.

- The default correlation method is the Pearson product-moment correlation and it will be used if the corr.type argument is not specified.

- There is no need to specify the weight variable explicitly. If no weight variable is specified, the default weight (total student weight in this case) is identified automatically from the merged respondents’ data and used. If you have a good reason to change the weight variable, you can do so by adding weight.var = "SENWGT", for example.

- The DF.type argument controls which method shall be used to compute the degrees of freedom (DF) for the t-test statistic when computing the p-values. As of now, the function accepts "WS" (Welch-Satterthwaite approximation, see Satterthwaite, 1946; Welch, 1947) and "JR" (Johnson-Rust correction, see Johnson & Rust, 1992) as values of the DF.type argument to estimate the effective DF. The default is "JR" and it is recommended to use it.

- Unless save.output = FALSE is added explicitly, the output will be written to an MS Excel file on the disk. Otherwise, the output will be printed to the console.

- If no output file is specified, then the output will be saved with the “Analysis.xlsx” file name under the working directory (can be obtained with getwd()).

- Unless open.output = FALSE is added explicitly to the calling syntax, the output file will be opened after all computations are finished. Setting open.output = FALSE is useful when multiple calling syntaxes for different analyses are executed and no immediate inspection of the output is needed.

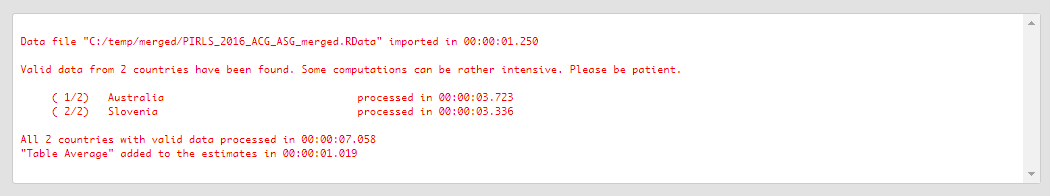

Executing the code from above will print the following output in the RStudio console:

When all operations are finished, the output will be written to the disk as an MS Excel workbook. If open.output = TRUE (default), the file will be opened in the default spreadsheet program (usually MS Excel). Refer to the explanations on the structure of the workbook, its sheets and the columns here.

Let’s compute the correlation between Students Being Bullied complex background scale (ASBGSB, check the PIRLS 2016 technical documentation on how this scale is constructed and its properties) and the set of PVs for overall student reading achievement:

lsa.corr(data.file = "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData",
         bckg.corr.vars = "ASBGSB", PV.root.corr = "ASRREA")

The calling syntax from above is similar to the previous one, but it has just one background variable (ASBGSB, Students Being Bullied) and adds the PV.root.corr argument and its value, the PVs for overall student reading achievement. Note how the PVs are specified. The five PVs for the overall reading achievement are ASRREA01, ASRREA02, ASRREA03, ASRREA04, and ASRREA05. In the PV.root.corr argument we need to specify only the root of the PVs, “ASRREA”. The function will use this root/common name to select all five PVs and include them in the computations. For more details on the PV roots (also for the PV roots for studies other than TIMSS and PIRLS and their additions) and on the computational routines involving PVs, see here.

Executing the syntax from above will overwrite the previous output because it has the same file name defined (a warning will be displayed in the console). The columns in the “Estimates” sheet will now be different. For the meaning of the column names, refer to the list here.
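One way to keep both sets of results is to give the second analysis its own file through the output.file argument. The sketch below assumes a results folder C:/temp/Results and a hypothetical file name. The call is guarded so that it only runs when RALSA is installed and the merged file exists.

```r
# Path of the merged file used in the examples of this document
merged_file <- "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData"

# Run only when RALSA is installed and the merged file is present on this machine
if (requireNamespace("RALSA", quietly = TRUE) && file.exists(merged_file)) {
  library(RALSA)
  lsa.corr(
    data.file = "C:/temp/merged/PIRLS_2016_ACG_ASG_merged.RData",
    bckg.corr.vars = "ASBGSB",
    PV.root.corr = "ASRREA",
    # Hypothetical folder and file name, chosen so that Analysis.xlsx is not overwritten
    output.file = "C:/temp/Results/corr_ASBGSB_ASRREA.xlsx",
    open.output = FALSE
  )
}
```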

Computing correlation coefficients using the GUI

To start the RALSA user interface, execute the following command in RStudio:

ralsaGUI()

For the examples that follow, merge a new file with PIRLS 2016 data for Australia and Slovenia (Slovenia, not Slovakia) taking all student and school principal variables. See how to merge data files here. You can name the merged file PIRLS_2016_ACG_ASG_merged.RData.

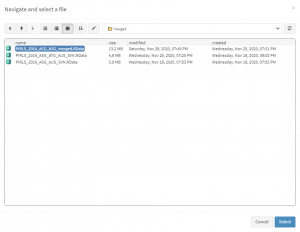

When done merging the data, select Analysis types > Correlations from the menu on the left. Once the Correlations section of the GUI is displayed, click on the Choose data file button. Navigate to the folder containing the merged PIRLS_2016_ACG_ASG_merged.RData file, select it and click the Select button.

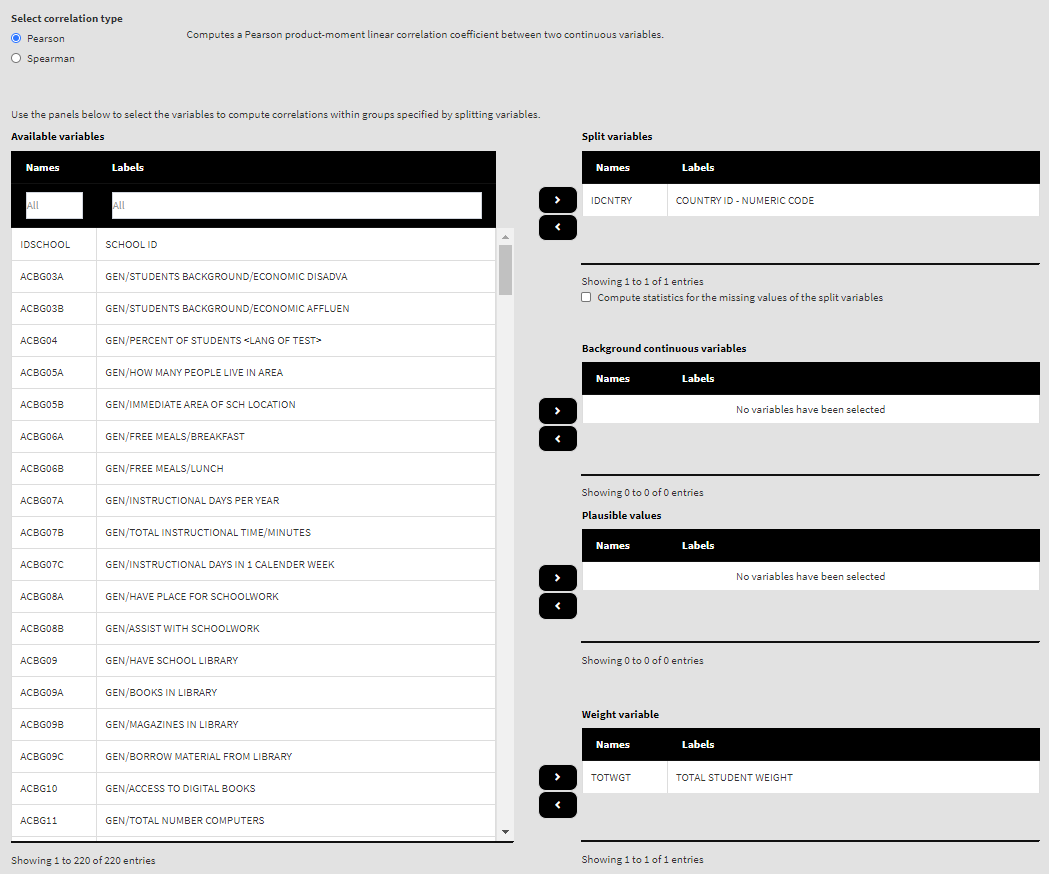

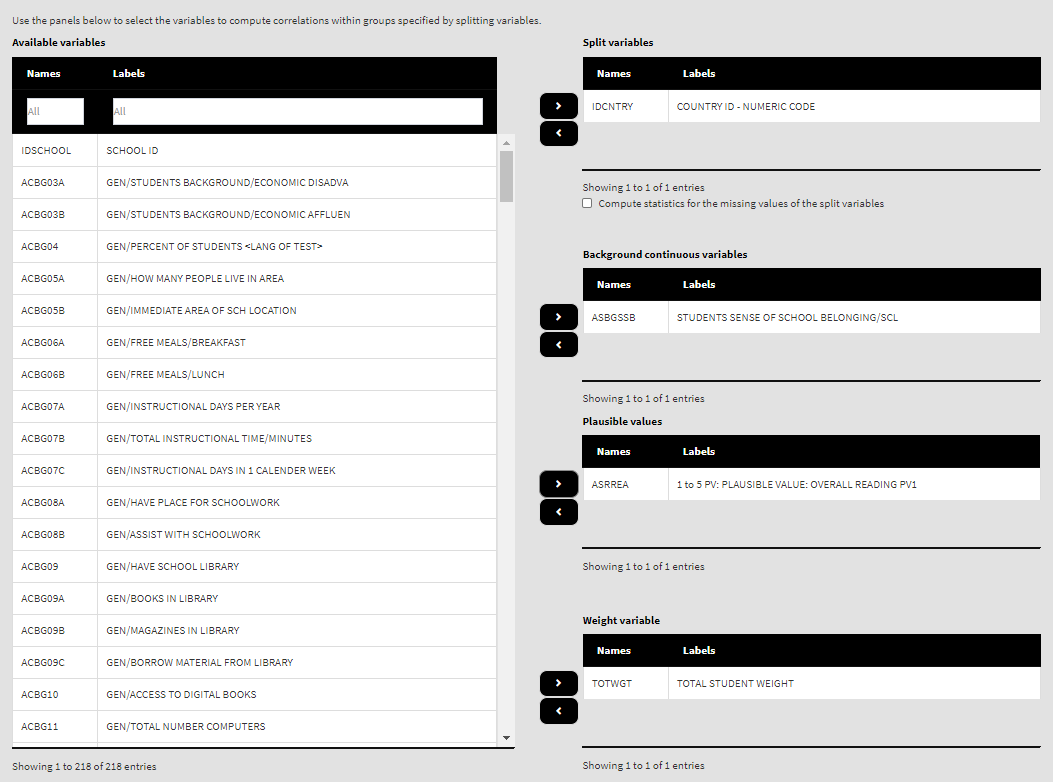

Once the file is loaded, you will see a panel on the left (available variables) and a set of panels on the right where variables from the list of available ones can be added. Above the panels you will also see information about the loaded file and radio buttons to select the type of correlation, Pearson or Spearman. We are going to compute correlation coefficients between continuous variables, so let’s leave the chosen option at the default, Pearson.
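The difference between the two correlation types can be sketched in base R on invented, unweighted ordinal data. The Spearman coefficient is the Pearson coefficient computed on the ranks of the values, which is why it suits ordinal variables. This is only an illustration of the two methods and not the weighted routine used by the package.

```r
# Two hypothetical ordinal (Likert-type) items answered by seven respondents
likert_a <- c(1, 2, 2, 3, 4, 4, 5)
likert_b <- c(1, 1, 2, 2, 3, 5, 5)

# Pearson product-moment correlation on the raw codes
pearson <- cor(likert_a, likert_b, method = "pearson")

# Spearman rank-order correlation
spearman <- cor(likert_a, likert_b, method = "spearman")

# The same Spearman coefficient obtained as Pearson on (tie-averaged) ranks
spearman_by_hand <- cor(rank(likert_a), rank(likert_b))

print(c(pearson = pearson, spearman = spearman, by_hand = spearman_by_hand))
```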

Use the mouse to select variables from the list of Available variables and the arrow buttons in the middle of the screen to add them to different fields (or remove them) to make the settings for the analysis. You can use the filter boxes on the top of the panels to find the needed variables quickly.

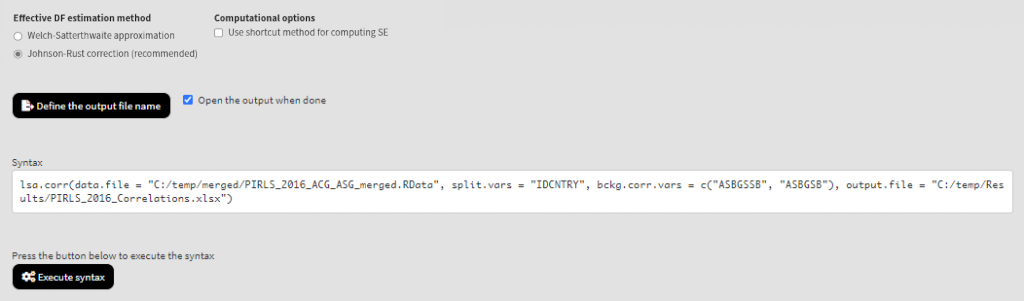

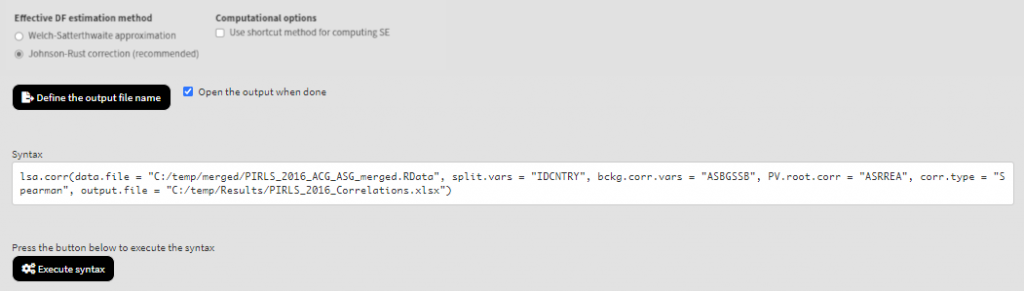

As a start, let’s compute the correlation coefficient between two background scales – Students’ Sense of School Belonging (ASBGSSB) and Students Being Bullied (ASBGSB) in Australia and Slovenia (check the PIRLS 2016 technical documentation on how these scales are constructed and their properties). Select variables ASBGSSB and ASBGSB from the list of Available variables and add them to the list of Background continuous variables using the right arrow button. This is all that needs to be done. Scroll down and click on the Define output file name. Navigate to the folder C:/temp/Results (or to the folder where you want to save the output) and define the output file name. After you do so, a checkbox will appear next to the Define the output file name. If ticked, the output will open after all computations are finished. Underneath the calling syntax will be displayed. Under all of these the Execute syntax button will be displayed. The final settings in the lower part of the screen should look like this:

Click on the Execute syntax button. The GUI console will appear at the bottom and will log all completed operations:

A few things to note:

- More than two background variables and/or sets of PVs can be correlated at a time. The correlation coefficients will be computed for each pair separately. However, note that all cases with missing values on any of the variables will be removed in advance. This can change the results compared to executing the analyses for each pair separately, as explained here.

- In international large-scale assessments all analyses must be done separately by country. The country ID variable (IDCNTRY, or CNT in PISA) is always selected as the first splitting variable and cannot be removed from the Split variables panel.

- The default correlation method is the Pearson product-moment correlation (correlating continuous variables). If categorical (ordinal) variables need to be correlated, the Spearman rank-order correlation method should be preferred.

- The default weight variable is selected and added automatically in the Weight variable panel. It can be replaced with another weight variable available in the data set. If the default weight variable is selected, it will not be shown in the syntax window. If no weight variable is selected in the Weight variable panel, the default one will be used automatically.

- The Use shortcut method for computing SE checkbox is not ticked by default. This makes the function compute the standard error using the “full” method for the sampling variance component. For more details see here and here.
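The difference between the “full” and “shortcut” methods can be sketched with a toy jackknife in base R. Everything here is an illustrative assumption: the data are simulated, there are only four zones with two sampling units each, the shortcut always doubles the first unit (the studies pick the doubled unit in their own way), and the factor of one half in the full method follows the commonly described formula for designs with two replicates per zone. It is not the code used by RALSA.

```r
set.seed(1)
n_zones <- 4

# Simulated data: 4 jackknife zones, 2 sampling units (psu) per zone, 10 cases each
toy <- data.frame(
  zone = rep(1:n_zones, each = 20),
  psu  = rep(rep(1:2, each = 10), times = n_zones),
  wgt  = runif(80, 0.5, 2)
)
toy$x <- rnorm(80)
toy$y <- 0.5 * toy$x + rnorm(80)

# Weighted Pearson correlation between x and y
wcor <- function(d, w) cov.wt(d[, c("x", "y")], wt = w, cor = TRUE)$cor[1, 2]

# Estimate with the full sample weights
full_est <- wcor(toy, toy$wgt)

# Replicate estimate for zone h: one unit gets doubled weights, the other gets zero
rep_est <- function(h, doubled) {
  w <- toy$wgt
  in_zone <- toy$zone == h
  w[in_zone] <- ifelse(toy$psu[in_zone] == doubled, 2 * w[in_zone], 0)
  wcor(toy, w)
}

r1 <- sapply(1:n_zones, rep_est, doubled = 1)  # first unit doubled in each zone
r2 <- sapply(1:n_zones, rep_est, doubled = 2)  # second unit doubled in each zone

# "Shortcut": one replicate per zone
var_shortcut <- sum((r1 - full_est)^2)

# "Full": two replicates per zone, squared deviations halved
var_full <- 0.5 * sum((r1 - full_est)^2 + (r2 - full_est)^2)

print(c(SE_shortcut = sqrt(var_shortcut), SE_full = sqrt(var_full)))
```

With real data the number of zones is much larger (75 in the studies named above), so the full method uses 150 replicate estimates instead of 75.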

If the Open the output when done checkbox is ticked, the output will open automatically in the default spreadsheet program (usually MS Excel) when all computations are completed. Refer to the explanations on the structure of the workbook, its sheets and the columns here.

Let’s compute the correlation between Students Being Bullied complex background scale (ASBGSB, check the PIRLS 2016 technical documentation on how this scale is constructed and its properties) and the set of PVs for overall student reading achievement. Select the ASBGSSB (Students’ Sense of School Belonging) in the list of Background continuous variables and move it back to the list of Available variables using the left arrow button. From the list of Available variables locate the root of the overall reading plausible values (ASRREA). You can use the filter boxes at the top of the panel to search for it, either by name or label. Select the root and add it to the Plausible values panel using the arrow buttons. This section of the interface should look like this:

Note how the PVs are specified. The five PVs for the overall reading achievement are ASRREA01, ASRREA02, ASRREA03, ASRREA04, and ASRREA05. The lists of Available variables and Plausible values will not show the five separate PVs, but just their root/common name, ASRREA, without the numbers at the end. In the background, the function will take all five PVs and include them in the computations. For more details on the PV roots (also for the PV roots for studies other than TIMSS and PIRLS and their additions) and on the computational routines involving PVs, see here.
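How a root name maps to the individual PVs can be sketched with base R pattern matching. The helper find_pvs below is hypothetical and written only for illustration. It is not a RALSA function and does not reproduce the package's internal logic. The vector of names mixes PIRLS and ICCS variables purely to show both naming conventions.

```r
# Illustrative variable names: PIRLS-style PVs (number at the end)
# and ICCS-style PVs (number in the middle)
var_names <- c("IDCNTRY", "ASBGSB", "ASBGSSB",
               paste0("ASRREA0", 1:5),
               paste0("PV", 1:5, "CIV"))

# Hypothetical helper: return the PV names matching a root
find_pvs <- function(root, names) {
  if (grepl("#", root, fixed = TRUE)) {
    # Root such as "PV#CIV": the "#" stands for the sequential PV number
    pattern <- paste0("^", sub("#", "[0-9]+", root, fixed = TRUE), "$")
  } else {
    # Root such as "ASRREA": the sequential PV number follows the root
    pattern <- paste0("^", root, "[0-9]+$")
  }
  grep(pattern, names, value = TRUE)
}

print(find_pvs("ASRREA", var_names))
print(find_pvs("PV#CIV", var_names))
```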

Because we are using the Correlations section directly after performing the previous analysis, the rest of the settings from that analysis are still in place. There is no need to change any of the remaining settings, unless you want to. You may want to change the output file name, though, otherwise the previous output will be overwritten. Note that the displayed syntax will change, reflecting the removal of the ASBGSSB variable and the inclusion of ASRREA, the root of the five PVs for the overall reading achievement:

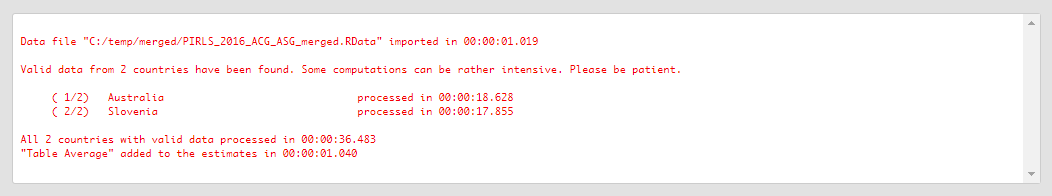

Press the Execute syntax button. The GUI console will update, logging all completed operations:

If the Open the output when done checkbox is ticked, the output will open automatically in the default spreadsheet program (usually MS Excel) when all computations are completed. As with the previous analyses, refer to the explanations on the structure of the workbook, its sheets and the columns here.